[Independent research between January 2021 – August 2023]

Context: AA Second Year (architectural design) extended as independent ML research

Disciplines: ML System Design · Spatial Translation · Gamification · Human-Computer Interaction

Stack: SVM · TF-IDF · CNN · Python · MATLAB · AR

A three-phase machine learning system that translates qualitative human experience into physical architecture and a gamified maze that lets people feel that translation as they navigate it.

The Question

Corporate architecture firms have a culture problem and nobody talks about it honestly. Long hours, ambiguous mentorship, doors that lead nowhere, steep learning curves with no handrail. What if you could make someone feel that experience, rather than just read about it?

The project started as an architecture brief: design a gamified workplace simulation for architecture students. It became something more specific: a system that encodes 150 employees’ qualitative experiences into a decision tree, translates that decision tree into a physical maze, and then uses machine learning to regenerate the maze every time new experiences are added.

Phase 0: Research

150 interviews with architecture students and professionals. The same patterns surfaced across different firms, different countries, different seniority levels:

- Uncertainty without guidance (“navigating without a handrail”)

- Overtime framed as opportunity (“a steep ramp, no ceiling in sight”)

- Decisions made above you that close doors you didn’t know were open (“an unopenable door”)

- Rare moments of mentorship that change everything (“a clear corridor, suddenly”)

The research conclusion: people describe professional experience in spatial language. They already think of careers as mazes. This project made that literal through a simulation that turned their qualitative experiences into a spatial design.

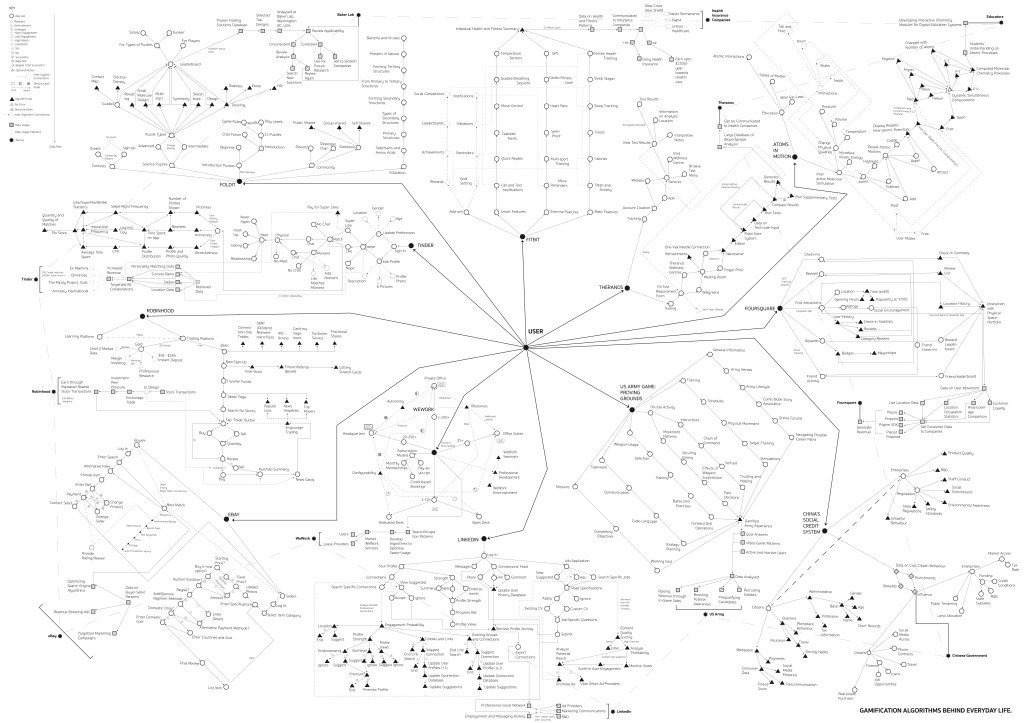

The first design problem: platforms like LinkedIn already gamify job-seeking, but the game is rigged. The player’s data feeds the platform; the platform uses it to serve the next employer.

The Spatial Translation System

Four spatial categories were defined to map qualitative experience types to architectural elements. Each category has three intensity levels:

| Experience Type | Spatial Category | Intensity 0 | Intensity 1 | Intensity 2 |
|---|---|---|---|---|
| Pressure, Deadlines | Stairs | 5 steps | 25 steps | 50 steps |
| Task difficulty | Ramps | 10° | 35° | 60° |
| Access, Decisions | Doors | Standard | Puzzled | Unopenable |
| Advice | Corridors | Single | Double | Obstacled |

Table 1: Decisions and their subsequent spatial translations

This is the translation grammar. Every qualitative sentence from an interview becomes a spatial specification. “Negotiating tight project deadlines” → Puzzle Door. “Working 5 hours overtime on Friday” → 60° Ramp. “Getting a paid taxi home at 1am” → 5 Steps.
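As a rough illustration, the grammar in Table 1 can be held as a plain lookup from spatial category and intensity level to a specification. The dictionary layout and the name `translate` are assumptions made for this sketch, not the project's actual code.

```python
# Table 1 as a lookup: spatial category -> specification at intensity 0, 1, 2.
# GRAMMAR and translate() are hypothetical names used only for illustration.
GRAMMAR = {
    "Stairs": ("5 Steps", "25 Steps", "50 Steps"),
    "Ramps": ("10° Ramp", "35° Ramp", "60° Ramp"),
    "Doors": ("Standard Door", "Puzzle Door", "Unopenable Door"),
    "Corridors": ("Single Corridor", "Double Corridor", "Obstacled Corridor"),
}


def translate(category, intensity):
    """Return the architectural specification for a category and intensity."""
    levels = GRAMMAR[category]
    if not 0 <= intensity < len(levels):
        raise ValueError(f"intensity must be 0-{len(levels) - 1}, got {intensity}")
    return levels[intensity]


print(translate("Doors", 1))   # Puzzle Door
print(translate("Ramps", 2))   # 60° Ramp
print(translate("Stairs", 0))  # 5 Steps
```

Keeping the grammar as data rather than branching logic means a new intensity level or category only changes the table, not the code that reads it.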

Phase 1: ML Model (Text to Space)

A TF-IDF vectoriser + SVM classifier (linear kernel) trained on 100 labelled interview datasets:

- Input: raw qualitative text from a new employee interview

- Output: spatial category + quantitative factor → architectural specification (CSV)

- The model predicts not just the type of experience but its intensity (number of steps, ramp steepness, door type, etc.)
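A minimal sketch of this kind of classifier, assuming scikit-learn and a toy training set: the training rows beyond the three documented examples, the use of two separate pipelines (one for category, one for intensity), and the name `predict_spec` are all assumptions for illustration. The repository holds the actual model.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.pipeline import make_pipeline
from sklearn.svm import SVC

# Toy rows: (interview text, spatial category, intensity 0-2).
# The first three follow the examples in this write-up; the rest are invented.
TRAIN = [
    ("Negotiating tight project deadlines", "Door", 1),
    ("Working 5 hours overtime on Friday", "Ramp", 2),
    ("Getting a paid taxi home at 1 am", "Stairs", 0),
    ("My mentor walked me through the brief", "Corridor", 0),
    ("Locked out of the client decision", "Door", 2),
    ("An easy redlining task before lunch", "Ramp", 0),
    ("Three submissions landed in one week", "Stairs", 2),
    ("Conflicting advice from two directors", "Corridor", 1),
]

texts = [row[0] for row in TRAIN]
categories = [row[1] for row in TRAIN]
intensities = [row[2] for row in TRAIN]


def make_model():
    """TF-IDF features feeding a linear-kernel SVM."""
    return make_pipeline(TfidfVectorizer(), SVC(kernel="linear"))


# Assumption: category and intensity are predicted by two separate models.
category_model = make_model().fit(texts, categories)
intensity_model = make_model().fit(texts, intensities)


def predict_spec(text):
    """Predict (spatial category, intensity) for one interview sentence."""
    category = str(category_model.predict([text])[0])
    intensity = int(intensity_model.predict([text])[0])
    return category, intensity


print(predict_spec("Working overtime again this Friday"))
```

With only a handful of rows the predictions are not meaningful; the point is the shape of the pipeline, from raw text to a category and an intensity level.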

The detailed model description and code can be found on GitHub here.

Here are three ‘qualitative datasets’ and their ‘spatial’ translations, as predicted by the Phase 1 model:

| Input: Qualitative Dataset | Output: Spatial Category | Output: Quantitative Factor | Output: Final Spatial Translation |
|---|---|---|---|
| Negotiating tight project deadlines | Door | [1] | Puzzle Door |
| Working 5 hours overtime on Friday | Ramp | [2] | 60° Ramp |
| Getting a paid taxi home at 1 am | Stairs | [0] | 5 Steps |

Phase 2: Generative decision tree modification

The decision tree determines which routes through the maze are possible. Phase 2 uses ML predictions from Phase 1 to modify this tree: either adding new branches or modifying existing ones, depending on how frequently each spatial category appears:

- If Spatial Category count ≥ 10: modify an existing branch with the new quantitative factor. The maze gets harder in areas of shared collective experience.

- If Spatial Category count < 10: add a new branch entirely. New territory opens in the maze where fewer people have reported that experience type.

This logic means the maze’s topology is a direct reflection of collective data density: where many people share the same experience, the maze reinforces it. Where experiences diverge, the maze branches.

Phase 2 therefore integrates the spatial translations from Phase 1 into the existing decision tree through conditional modification: the category count decides whether a prediction rewrites an existing branch or creates a new one.
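The threshold rule can be sketched as follows. The `Node` class, the `integrate` function, attaching new branches to the root, and counting the incoming prediction towards the threshold are all assumptions of this sketch; only the cut-off of 10 comes from the rule above.

```python
from collections import Counter
from dataclasses import dataclass, field

THRESHOLD = 10  # cut-off from the rule described above


@dataclass
class Node:
    """Hypothetical decision tree node: one spatial element in the maze."""
    category: str
    intensity: int
    children: list = field(default_factory=list)


def find(node, category):
    """Depth-first search for the first node with the given category."""
    if node.category == category:
        return node
    for child in node.children:
        hit = find(child, category)
        if hit is not None:
            return hit
    return None


def integrate(root, counts, category, intensity):
    """Apply the threshold rule and report which action was taken."""
    counts[category] += 1  # assumption: the new prediction counts too
    existing = find(root, category)
    if counts[category] >= THRESHOLD and existing is not None:
        existing.intensity = intensity  # shared experience: modify the branch
        return "modified"
    root.children.append(Node(category, intensity))  # rarer experience: branch
    return "added"


root = Node("Start", 0, [Node("Ramp", 1)])
counts = Counter({"Ramp": 9, "Door": 2})
print(integrate(root, counts, "Ramp", 2))  # modified
print(integrate(root, counts, "Door", 2))  # added
```

In this sketch a category that passes the threshold but has no branch yet still gets a new branch, since there is nothing to modify.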

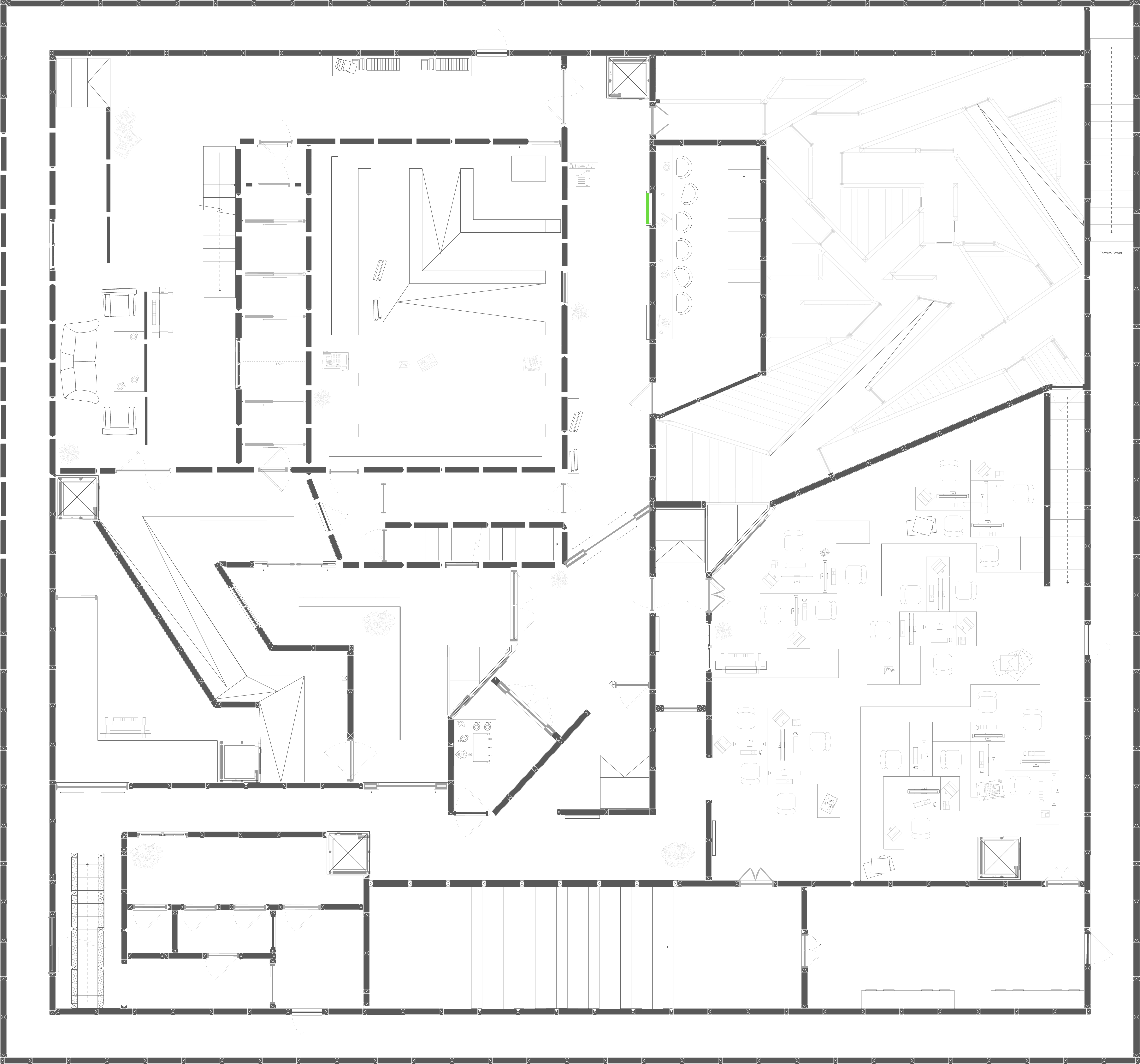

Phase 3: CNN floor plan modification

A Convolutional Neural Network (CNN) was trained on an annotated floor plan dataset to recognise stairs, ramps, doors, corridors, and their subcategories. It then implements the decision tree changes directly on the architectural drawings.

Architectural viability constraints were built into the model’s logic before any modification:

- Doors must be placed between walls on both sides

- Ramp slopes must meet minimum access requirements

- Corridor widths must accommodate two people passing

- New elements cannot block emergency exit routes

These constraints are embedded in the integration logic so the system cannot produce an architecturally impossible output.
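To illustrate how two of these constraints could gate a modification, here is a minimal sketch on a character-grid floor plan. The grid encoding (`#` wall, `.` floor, `S` start, `E` emergency exit, `X` obstacle) and every function name are hypothetical; the project's own checks operate on annotated drawings and are not reproduced here.

```python
from collections import deque

# Hypothetical plan encoding: '#' wall, '.' floor, 'S' start, 'E' emergency exit.
PLAN = [
    "#######",
    "#S...E#",
    "#.###.#",
    "#.....#",
    "#######",
]


def cell(plan, r, c):
    """Return the symbol at (r, c), or None outside the plan."""
    if 0 <= r < len(plan) and 0 <= c < len(plan[0]):
        return plan[r][c]
    return None


def door_has_walls_both_sides(plan, r, c):
    """A door may replace a wall cell only if walls flank it on two opposite sides."""
    if cell(plan, r, c) != "#":
        return False
    horizontal = cell(plan, r, c - 1) == "#" and cell(plan, r, c + 1) == "#"
    vertical = cell(plan, r - 1, c) == "#" and cell(plan, r + 1, c) == "#"
    return horizontal or vertical


def exit_reachable(plan):
    """Breadth-first search from 'S' to 'E' over floor cells."""
    start = next((r, c) for r, row in enumerate(plan)
                 for c, ch in enumerate(row) if ch == "S")
    seen, queue = {start}, deque([start])
    while queue:
        r, c = queue.popleft()
        if plan[r][c] == "E":
            return True
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if (nr, nc) not in seen and cell(plan, nr, nc) in (".", "E"):
                seen.add((nr, nc))
                queue.append((nr, nc))
    return False


def try_add_obstacle(plan, r, c):
    """Return the modified plan, or None if the exit would be cut off."""
    rows = [list(row) for row in plan]
    rows[r][c] = "X"
    candidate = ["".join(row) for row in rows]
    return candidate if exit_reachable(candidate) else None


print(door_has_walls_both_sides(PLAN, 2, 3))  # True
once = try_add_obstacle(PLAN, 1, 3)           # accepted: lower route still open
print(try_add_obstacle(once, 3, 3))           # None: both routes blocked
```

Checking the constraint on a candidate copy before committing it is what keeps an invalid plan from ever becoming the current one.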

Testing and Iteration

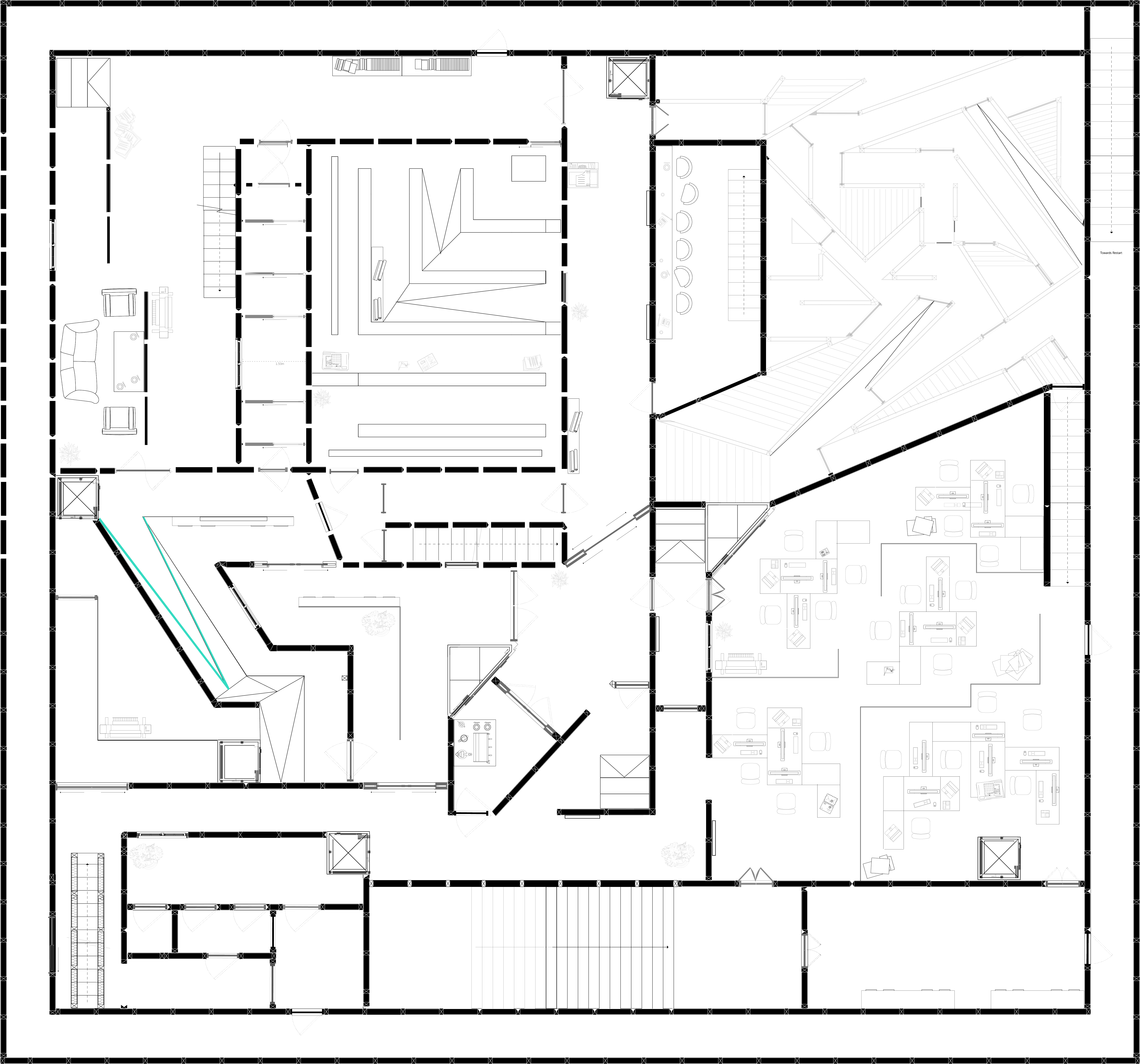

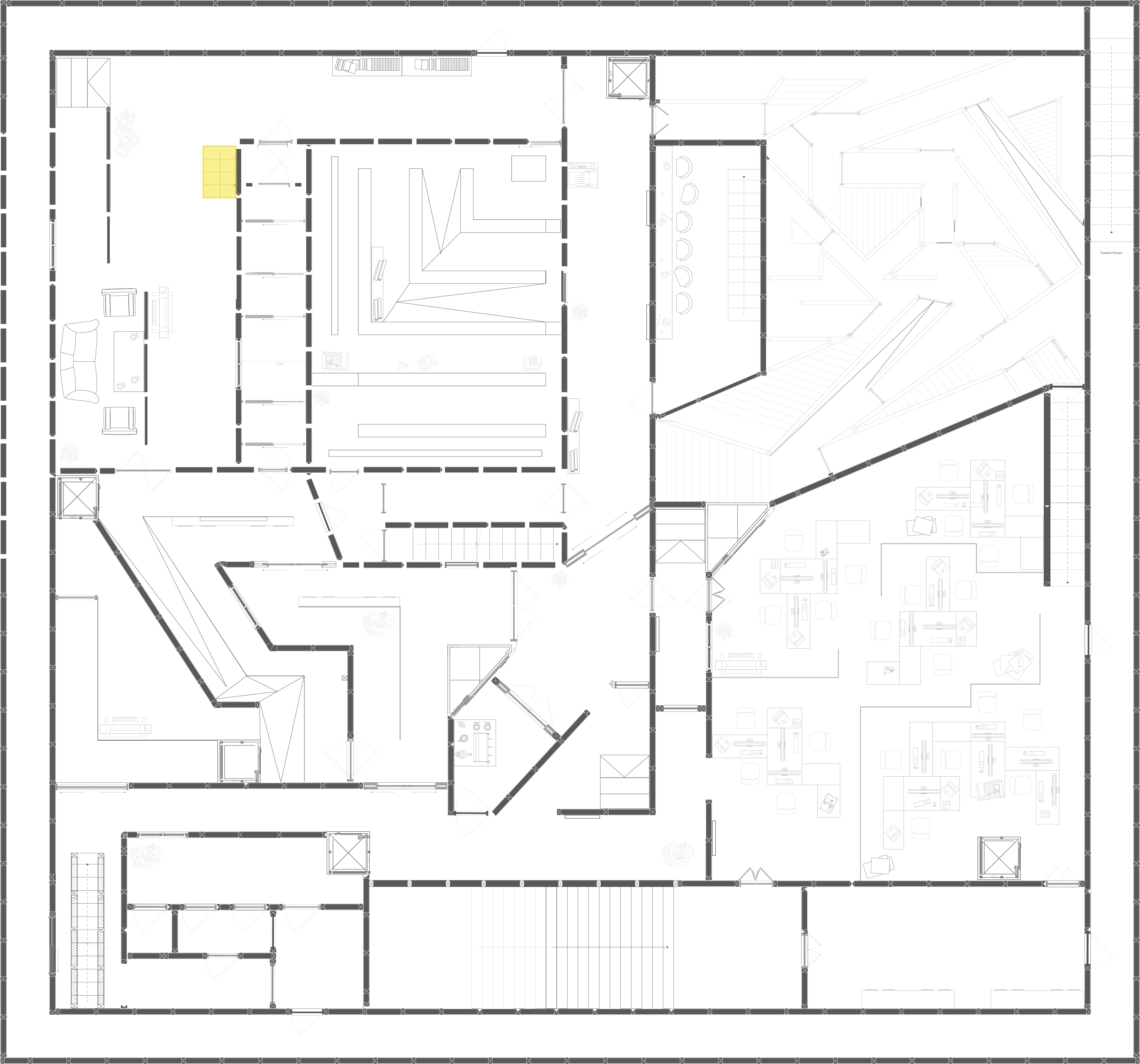

The code includes iterate_on_integration, which implements iteration logic based on feedback and performance. It applies the model and the decision tree modifications to the same floor plan multiple times. Repeating the integration on a single layout lets the model improve on that layout, which makes the training process more effective. Images 3-6 show how the model implemented experiences 1-3 from Animation [A].

Experience 1: [New Node] ‘Unopenable Door’.

The model used the green door notation and replicated it onto an empty wall with no doors. The code defines a constraint that a door needs to be between walls on both sides.

Experience 2: [Modified Node] ‘60° Ramp’.

When modifying ramps, the model does not visibly alter the plan, because the architectural notation for ramps does not show elevation. The notation on plan therefore looks the same for modified ramps.

Experience 3: [Modified Node] ‘5 Steps’.

For Experience 3, the model had to reduce a 15-step stair to a 5-step stair. It shortened the stair but did not apply the correct notation, so the drawing of the new 5-step stair does not communicate its direction accurately. The model did, however, identify the right stairway to modify on the plan. By contrast, for the ramp in Experience 2 it modified a random ramp on the plan.

Learnings:

- Ramp notation on architectural plans doesn’t communicate elevation change: a 10° ramp and a 60° ramp look identical in 2D plan view. The model correctly identified and modified the ramp element but produced an output that was visually indistinguishable from the original. This is a notation limitation, not a model failure, but it meant the floor plan output alone was insufficient to communicate the intensity of that experience type. The solution: pair every floor plan modification with a numerical annotation and an elevation section drawing.

- Stair direction notation was inconsistent in early outputs; the model found the right staircase but replaced it with incorrect directional arrows. This required a post-processing validation step: checking that every modified stair element preserved the approach direction before the output was accepted.
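The stair validation step could look roughly like the check below. The `Stair` record, its fields, and `validate_stair_edit` are hypothetical names for this sketch; the actual post-processing works on the plan annotations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stair:
    """Hypothetical record of one stair element on the plan."""
    stair_id: str
    steps: int
    direction: str  # approach direction of the arrow: "N", "E", "S" or "W"


def validate_stair_edit(before, after, expected_steps):
    """Return a list of problems; an empty list means the edit is accepted."""
    errors = []
    if after.stair_id != before.stair_id:
        errors.append("wrong stair modified")
    if after.steps != expected_steps:
        errors.append(f"expected {expected_steps} steps, got {after.steps}")
    if after.direction != before.direction:
        errors.append(
            f"direction changed from {before.direction} to {after.direction}"
        )
    return errors


before = Stair("stair-1", 15, "N")
after = Stair("stair-1", 5, "S")  # right stair, right length, wrong arrow
print(validate_stair_edit(before, after, expected_steps=5))
# ['direction changed from N to S']
```

Returning every problem at once, rather than stopping at the first, makes it easier to see whether an output failed on identity, length, or direction.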

Both failures reinforced the same principle: the gap between what a system can output and what a human can use is where the real design work happens.

Rebuilding using AI:

- Replace SVM with an LLM reading full interview transcripts: capturing emotional register, uncertainty level, and relational dynamics, not just categorical classification. A sentence like “my director never remembered my name” maps to something more nuanced than “obstacled corridor.”

- Add a text-to-3D layer: use the modified floor plan as a conditioning input to generate a navigable 3D environment. Players submit their experience, watch the maze shift around them in real time via AR.

- Replace manual architectural constraint validation with a constraint-satisfaction model that can propose valid modifications rather than rejecting invalid ones.

Additional Links:

GitHub repository for all model-training source code: Link

Original Architectural Game-Design Project: ‘Spatialized DecisionTree: An Architectural Maze’.

[Independent project completed under the guidance of Oliviu Ghencu at the Architectural Association, January 2023 – August 2023]