An AI-powered cognitive profiling platform that maps how people think and converts those patterns into task-specific study strategies. Built from scratch at Brown University with faculty collaborators from Brown and Harvard.

Context: Product Lead, Brown University (Jun 2025-present)

Collaborators: Cognitive science faculty at Brown and Harvard

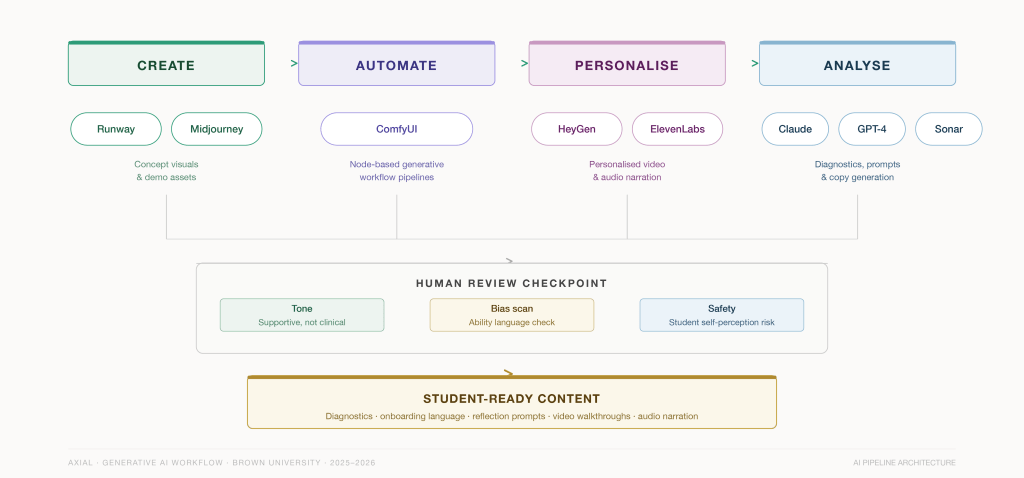

Stack: ComfyUI · Runway · Midjourney · HeyGen · ElevenLabs · Claude · GPT-4 · Sonar · Figma

The Question

Every learning system encodes assumptions about intelligence. If those assumptions are wrong – if the system mistakes a visual-spatial thinker for a slow reader, or a highly empathetic student for an inattentive one – it subtly fails the learner. Maybe not visibly, but intrinsically.

Axial maps how a student thinks (visual-spatial reasoning, working memory, empathy, creativity, and language processing, across IQ, EQ, and SQ) and translates that map into personalised learning strategies. The goal is not to label students but to make cognition legible to the students themselves.

A working prototype of the system can be found here: Link

The Problem

High-performing students are working hard but studying inefficiently. Not because they lack motivation but because their methods are misaligned with how their brains actually process, retain, and apply information. The result: 76% report working hard without retaining effectively. 72% multitask constantly, despite knowing it hurts. Students describe “swirling between apps,” “doing everything right but still falling behind,” “working all day but nothing sticks.”

Existing tools optimise content access or time management. None explain why a strategy works for one learner and fails for another. Axial reframes this as a method-cognition mismatch instead of a motivation problem.

The System

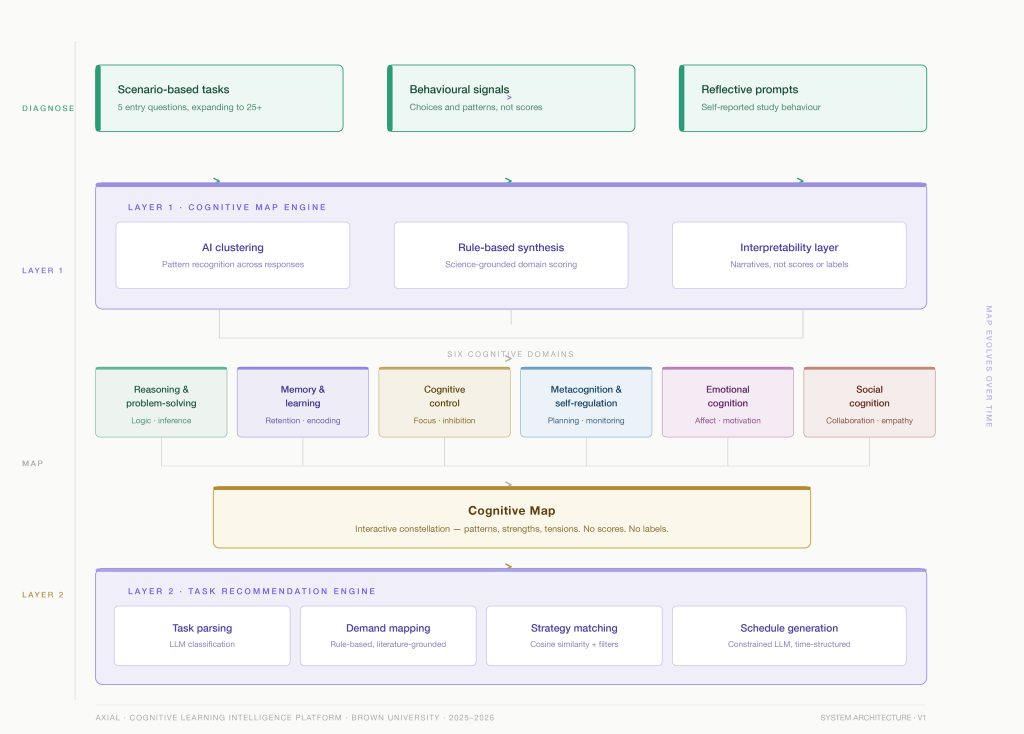

Axial runs as a two-layer personalization engine. Layer 1 builds who you are cognitively. Layer 2 converts that into what you should do right now.

Layer 1: Cognitive Map

A science-grounded profile derived from diagnostic signals, mapping a learner across six cognitive domains and their interdependencies. The map is built across three tiers anchored in the research literature:

- Processing style: how you take in and organise information (Cognitive Styles theory)

- Memory and load management: working memory capacity, susceptibility to overload, effective chunking strategies (Baddeley’s working memory model, Cognitive Load Theory)

- Learning strategies: what you actually do, such as planning, elaboration, self-testing, and help-seeking (MSLQ framework)

Layer 2: Task Recommendation Engine

When a learner brings a real task (“biochemistry exam in 3 days”), the engine:

- Parses the task into structured cognitive demands via LLM classification

- Maps those demands to specific cognitive skills using a rule-based, literature-grounded database

- Matches task demands against the learner’s Cognitive Map using cosine similarity and rule-based filters

- Generates a time-structured study schedule through a constrained LLM call guided by fixed inputs: profile summary, top strategies, and available time
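The matching step above can be sketched in a few lines. This is a minimal illustration and not Axial's actual implementation: the skill axes, the example strategies, the `min_minutes` rule, and the choice to build the target vector as task demand multiplied by learner strength are all assumptions made for the example.

```python
import math

# Hypothetical skill axes, chosen only for illustration.
SKILLS = ["retrieval", "visual_spatial", "planning", "sustained_focus"]


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_strategies(task_demands, learner_profile, strategies, available_minutes):
    """Apply a rule-based filter, then rank the remaining strategies by cosine similarity."""
    # Rule-based filter (assumed rule): drop strategies that need more time than is available.
    feasible = [s for s in strategies if s["min_minutes"] <= available_minutes]
    # Assumption: the target weights each skill by how much the task demands it
    # and how strongly it shows up in the learner's map.
    target = [d * p for d, p in zip(task_demands, learner_profile)]
    scored = [(s["name"], cosine_similarity(s["vector"], target)) for s in feasible]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Invented example data, one value per entry in SKILLS.
strategies = [
    {"name": "spaced self-testing", "vector": [1.0, 0.0, 0.5, 0.0], "min_minutes": 30},
    {"name": "concept mapping", "vector": [0.0, 1.0, 0.5, 0.0], "min_minutes": 45},
    {"name": "full mock exam", "vector": [1.0, 0.0, 0.0, 1.0], "min_minutes": 180},
]
task_demands = [0.9, 0.4, 0.6, 0.5]
learner_profile = [0.3, 0.9, 0.7, 0.4]

ranked = rank_strategies(task_demands, learner_profile, strategies, available_minutes=60)
for name, score in ranked:
    print(f"{name}: {score:.2f}")
```

With these invented numbers, the mock exam is filtered out by the time rule and concept mapping ranks above spaced self-testing, because the learner's visual-spatial strength dominates the target vector.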

The 6 cognitive dimensions

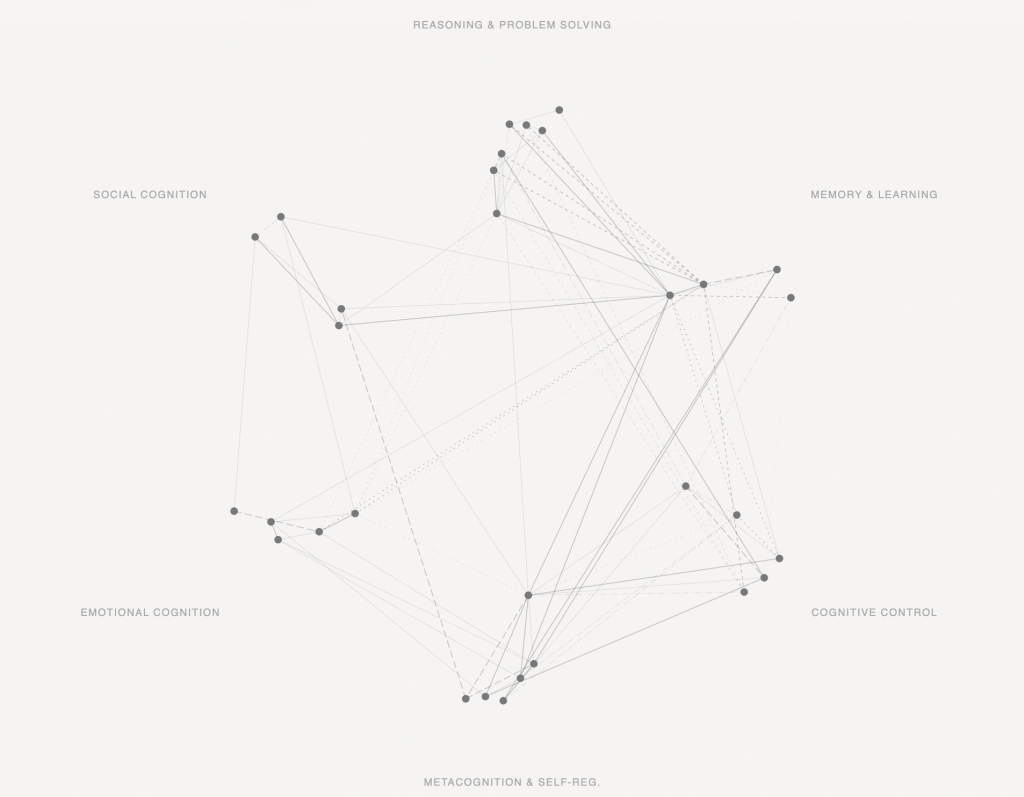

Rather than assigning a learning-style label, Axial maps patterns, strengths, tensions, and trade-offs across six domains, visualised as an interconnected constellation.

Reasoning and problem-solving: Logic, inference, abstract thinking. How you approach novel problems under uncertainty.

Memory and learning: Retention, encoding, retrieval. How you build and access stored knowledge.

Cognitive control: Focus, inhibition, task-switching. How well you regulate attention and filter distractions.

Metacognition and self-regulation: Planning, monitoring, self-correction. How well you understand and direct your own learning process.

Emotional cognition: Affect, motivation, anxiety response. How emotional state interacts with cognitive performance.

Social cognition: Collaboration, perspective-taking, empathy. How you process and work with others’ thinking.

A student might see strong visual-spatial reasoning co-existing with slower processing speed. That pattern has direct implications for how they should study, and Axial surfaces it without labelling either trait as a deficit.

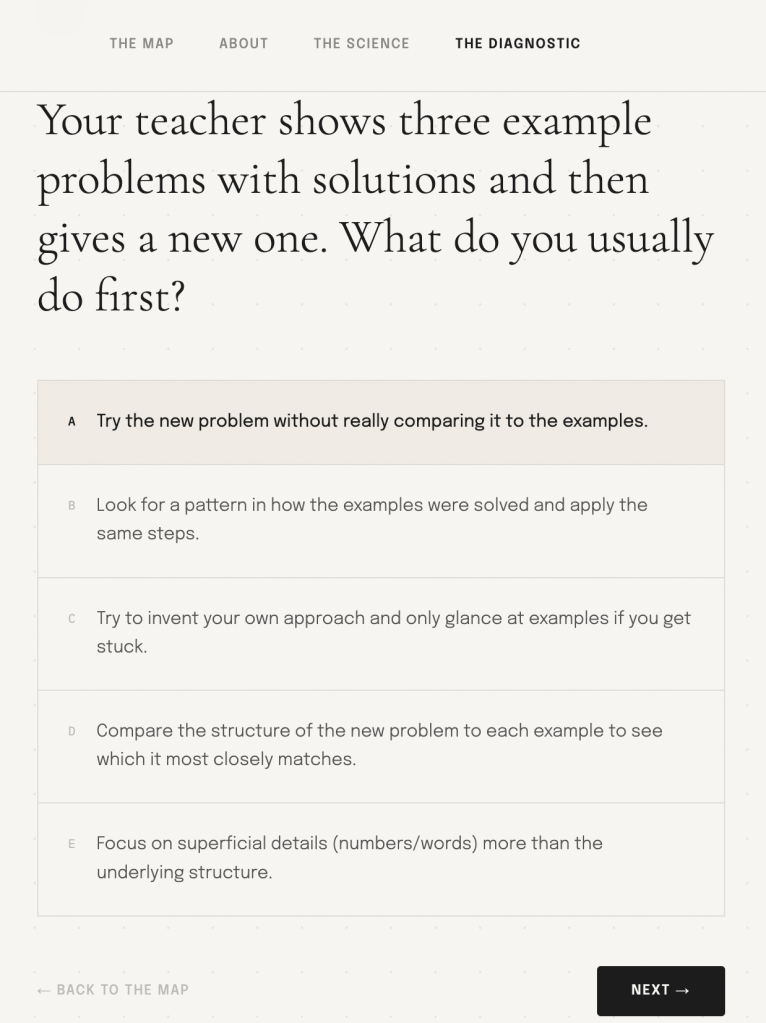

The Diagnostic

The diagnostic is short and approachable at entry: 5 scenario-based questions, expanding to 25+ for deeper profiling. It is explicitly not a personality test or an IQ test. Questions are framed as realistic study scenarios and behavioural choices, not correctness tasks.

AI is used for pattern recognition: it clusters response patterns across the six domains. Axial deliberately avoids numeric scores and evaluative labels.

Early testing showed that static trait labels introduced anxiety and identity distortion. The user sees patterns and narratives: “You tend to encode new material better when it is connected to existing knowledge before details are introduced”, not “63/100 on working memory”.

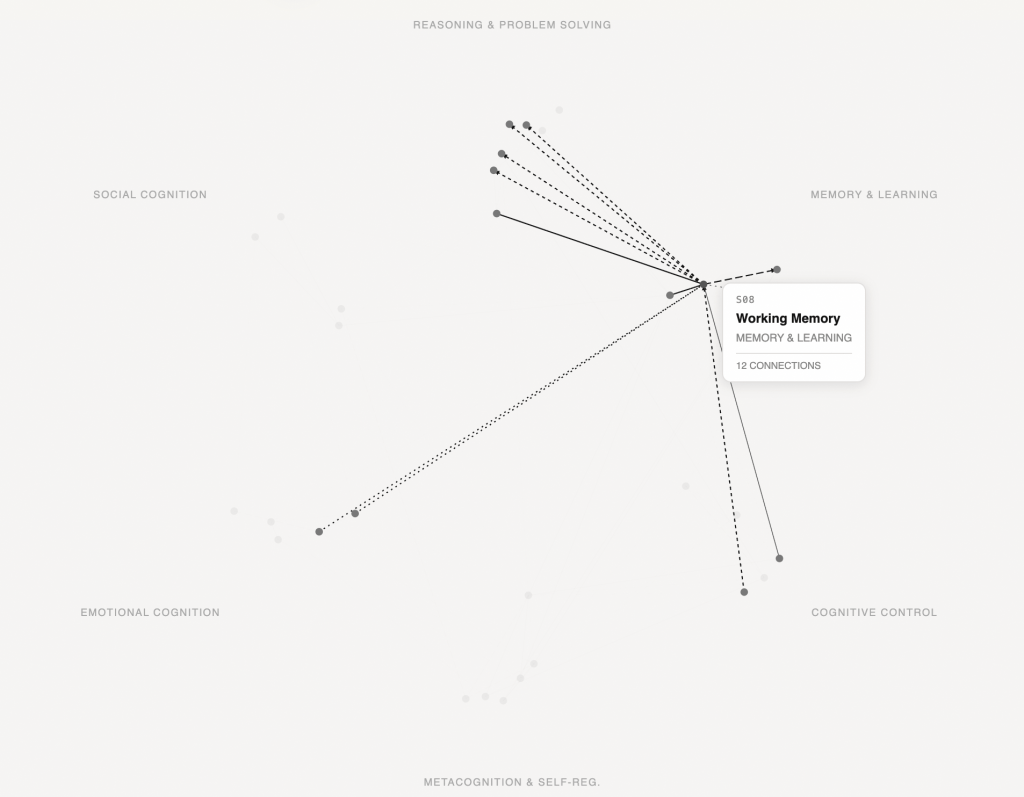

The Cognitive Map

The map is the core output of Layer 1 and the substrate for everything in Layer 2. It is interactive, visual, and deliberately non-evaluative: an interconnected constellation showing how a learner’s cognitive patterns relate to each other, not a ranked list of strengths and weaknesses.

Students can click on individual skills and view their connections with other skills across domains. Privacy is built into the data architecture:

- Students see their own map in full

- Educators see class-level aggregates and cognitive trends, not individual scores

- No student sees another student’s map

- Low-confidence model outputs trigger clarification prompts rather than silent inference
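The visibility rules and the low-confidence behaviour listed above could be expressed roughly as follows. This is a sketch under stated assumptions, not the platform's actual data architecture: the role names, the `0.7` threshold, the `Inference` record, and the wording of the clarification prompt are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.7  # hypothetical cut-off, not a value from the project


@dataclass
class Inference:
    domain: str        # one of the six cognitive domains
    pattern: str       # narrative pattern shown to the learner
    confidence: float  # model confidence in [0, 1]


def can_view_map(viewer_id, viewer_role, owner_id):
    """Only the student who owns a map may open the individual map."""
    return viewer_role == "student" and viewer_id == owner_id


def class_aggregate(inferences_by_student):
    """Educator view: counts of patterns per domain, with no student identifiers."""
    counts = Counter()
    for inferences in inferences_by_student.values():
        for inf in inferences:
            counts[(inf.domain, inf.pattern)] += 1
    return dict(counts)


def next_step(inference, threshold=CONFIDENCE_THRESHOLD):
    """Ask a follow-up question instead of silently inferring when confidence is low."""
    if inference.confidence < threshold:
        prompt = f"Could you answer one more scenario about {inference.domain}?"
        return ("clarify", prompt)
    return ("show", inference.pattern)


# Invented example data.
maps = {
    "s1": [Inference("memory and learning", "encodes best from context first", 0.9)],
    "s2": [Inference("memory and learning", "encodes best from context first", 0.4)],
}
print(can_view_map("s2", "student", "s1"))
print(class_aggregate(maps))
print(next_step(maps["s2"][0]))
```

Keeping the educator view as a function that returns only counts means individual records never need to leave the student-facing layer.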

The AI Workflow

The platform’s visual system, onboarding content, pilot outreach, and diagnostic copy were all built using a structured generative AI pipeline: a documented sequence with human review at the critical junction before anything reached a student.

Create: Visual System

- Runway and Midjourney used to generate concept visuals and pilot demo assets across different learner contexts

- ComfyUI node-based workflows built for the platform’s visual system – modular control over outputs so any new learner context (different age group, different subject area) could be run through the same pipeline with only the conditioning inputs changed

Personalise: Video and Audio

- HeyGen used to produce personalised outreach videos, with separate versions for school administrators, class teachers, and individual students

- ElevenLabs used for audio narration in product walkthroughs

- Both standardised into documented production processes for tonal consistency as the platform scaled

Automate: Production Pipelines

- Node graphs define: input type → model selection → sampling parameters → post-processing → output format

- Workflows documented so quality does not depend on any single session going well; repeatable at scale
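One way to picture such a documented workflow is as a small, immutable specification that records each stage, so a new learner context reuses the same pipeline with only the conditioning inputs changed. The sketch below is plain Python for illustration only. It is not ComfyUI's workflow format, and every field value (model name, sampling settings, conditioning keys) is a made-up placeholder.

```python
from dataclasses import dataclass, field, replace


@dataclass(frozen=True)
class PipelineSpec:
    """Documents one pipeline: input type -> model -> sampling -> post-processing -> output."""
    input_type: str
    model: str
    sampling: dict
    post_processing: tuple
    output_format: str
    conditioning: dict = field(default_factory=dict)

    def describe(self):
        """Return the stages in order, as they would appear in the written documentation."""
        return [
            f"input type: {self.input_type}",
            f"model selection: {self.model}",
            f"sampling parameters: {self.sampling}",
            f"post-processing: {', '.join(self.post_processing)}",
            f"output format: {self.output_format}",
        ]


# Placeholder values, not the project's real settings.
base = PipelineSpec(
    input_type="text prompt",
    model="example-image-model",
    sampling={"steps": 30, "seed": 1234},
    post_processing=("upscale", "colour grade"),
    output_format="png",
    conditioning={"age_group": "undergraduate", "subject": "biochemistry"},
)

# A new learner context changes only the conditioning inputs.
variant = replace(base, conditioning={"age_group": "high school", "subject": "history"})

for line in variant.describe():
    print(line)
```

Because the spec is frozen, a variant is always a new object derived from the documented base, which keeps the base workflow repeatable.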

Analyse: Copy and Diagnostic Generation

- Claude, GPT-4, and Sonar used across three tasks: generating diagnostic question sets, producing onboarding language, writing reflection prompts

- Prompt engineering frameworks built as structured templates (role, context, constraints, output format)

- Structured review protocols at every output stage: tone check (supportive, not clinical), bias scan (ability language, evaluative framing), safety review (student self-perception risk)
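A structured template and review gate of this kind might look like the sketch below. It assumes a simple text layout for the four template fields and a reviewer-filled checklist keyed by the three checks named above. The class, function names, and example content are hypothetical, and no model API is called.

```python
from dataclasses import dataclass


@dataclass
class PromptTemplate:
    role: str
    context: str
    constraints: list
    output_format: str

    def render(self):
        """Assemble the four fields into one prompt string (layout is an assumption)."""
        constraint_lines = "\n".join(f"- {c}" for c in self.constraints)
        return (
            f"Role: {self.role}\n"
            f"Context: {self.context}\n"
            f"Constraints:\n{constraint_lines}\n"
            f"Output format: {self.output_format}"
        )


# The three review stages named in the list above.
REVIEW_CHECKS = ("tone", "bias", "safety")


def approved(review):
    """An output passes only if a human reviewer marked every check as True."""
    return all(review.get(check) is True for check in REVIEW_CHECKS)


# Invented example content.
template = PromptTemplate(
    role="You write study-scenario questions for university students.",
    context="Diagnostic item for the memory and learning domain.",
    constraints=["No right or wrong answers", "Supportive, non-clinical tone"],
    output_format="One scenario followed by four behavioural choices",
)
print(template.render())
print(approved({"tone": True, "bias": True, "safety": False}))
```

Treating a missing check the same as a failed one means an output cannot slip through because a review step was skipped.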

Why I built Axial

Every system I have built – a sculpture that rewired itself from market data, a maze that grew from employee interviews, a colour algorithm that had to earn a colourist’s trust – has been a version of the same problem: how do you make something invisible legible without flattening it into a label?

Axial is the most direct version of that question I have worked on. Cognition is genuinely hard to represent. The temptation is to score it, rank it, turn it into something that feels conclusive. Resisting that, and building a system that shows patterns rather than verdicts and gets more useful the more someone uses it, has been the hardest and most interesting design constraint I have encountered. It is also the one where I am proudest of not taking the easy way out.